You could use command line to run Spark commands, but it is not very convenient. PySpark welcome message on running `pyspark` Run pyspark command and you will get to this: Run source ~/.bash_profile to source this file or open a new terminal to auto-source this file. These commands tell the bash how to use the recently installed Java and Spark packages. bash_profile (if you are using vim, you can do vim ~/.bash_profile to edit this file) export JAVA_HOME=$(/usr/libexec/java_home) export SPARK_HOME=~/spark-2.3.0-bin-hadoop2.7 export PATH=$SPARK_HOME/bin:$PATH export PYSPARK_PYTHON=python3 To tell the bash how to find Spark package and Java SDK, add following lines to your.

So, install Java 8 JDK and move to the next step. The recommended solution was to install Java 8. Not many people were talking about this error, and after reading several Stack Overflow posts, I came across this post which talked about how Spark 2.2.1 was having problems with Java 9 and beyond. My initial guess was it had to do something with Py4J installation, which I tried re-installing a couple of times without any help. This actually resulted in several errors such as the following when I tried to run collect() or count() in my Spark cluster: 4JJavaError: An error occurred while calling z. Several instructions recommended using Java 8 or later, and I went ahead and installed Java 10. Using the link above, I went ahead and downloaded the spark-2.3.0-bin-hadoop2.7.tgz and stored the unpacked version in my home directory. You can download the full version of Spark from the Apache Spark downloads page. This Python packaged version of Spark is suitable for interacting with an existing cluster (be it Spark standalone, YARN, or Mesos) - but does not contain the tools required to setup your own standalone Spark cluster. The Python packaging for Spark is not intended to replace all of the other use cases.

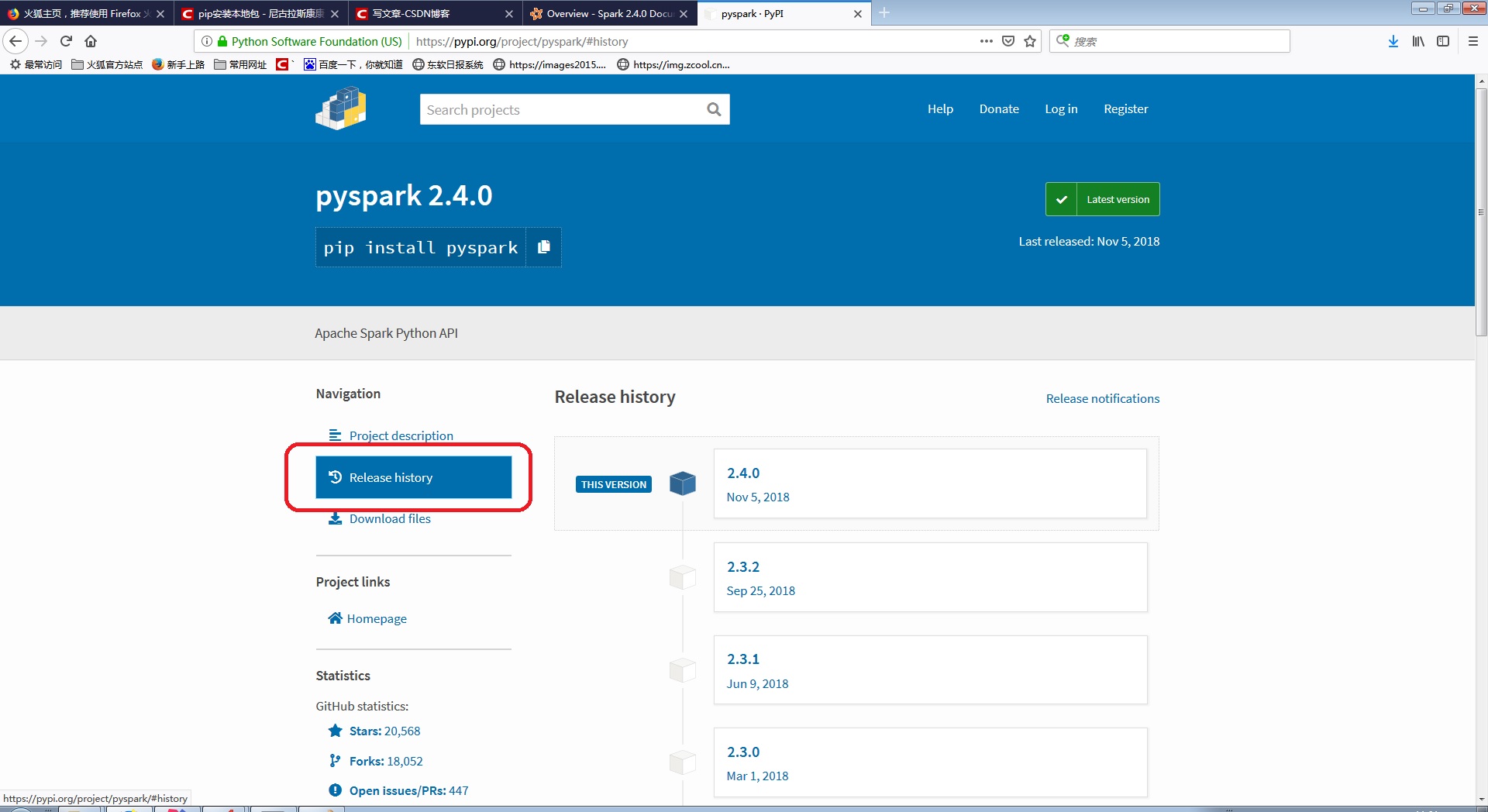

Reading several answers on Stack Overflow and the official documentation, I came across this: You could try using pip to install pyspark but I couldn’t get the pyspark cluster to get started properly. Activate the environment with source activate pyspark_env 2. You can install Anaconda and if you already have it, start a new conda environment using conda create -n pyspark_env python=3 This will create a new conda environment with latest version of Python 3 for us to try our mini-PySpark project.

These steps are for Mac OS X (I am running OS X 10.13 High Sierra), and for Python 3.6. How do we analyze all the “big data” around us?
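Because the Java version turned out to be the main stumbling block in this setup, it can help to check it before launching `pyspark`. Below is a minimal sketch in Python, not part of the original setup. The function names `java_major_version` and `missing_variables` are made up for illustration, and the sketch assumes that `java -version` prints a quoted version string such as "1.8.0_162" or "10.0.1".

```python
import os
import re
import subprocess


def java_major_version(version_output):
    """Return the major Java version found in `java -version` output, or None."""
    match = re.search(r'version "([^"]+)"', version_output)
    if match is None:
        return None
    parts = match.group(1).split(".")
    # Java 8 and earlier report themselves as 1.x (e.g. "1.8.0_162");
    # Java 9 and later put the major version first (e.g. "10.0.1").
    major = parts[1] if parts[0] == "1" and len(parts) > 1 else parts[0]
    digits = re.match(r"\d+", major)
    return int(digits.group()) if digits else None


def missing_variables(env):
    """List the variables from ~/.bash_profile that are unset or empty in env."""
    return [name for name in ("JAVA_HOME", "SPARK_HOME") if not env.get(name)]


if __name__ == "__main__":
    for name in missing_variables(os.environ):
        print(name + " is not set; check ~/.bash_profile")
    try:
        # `java -version` writes its report to stderr, not stdout.
        result = subprocess.run(
            ["java", "-version"],
            stdout=subprocess.PIPE,
            stderr=subprocess.PIPE,
            universal_newlines=True,
        )
    except FileNotFoundError:
        print("java was not found on PATH")
    else:
        major = java_major_version(result.stderr)
        if major is None:
            print("Could not determine the Java version")
        elif major > 8:
            # Assumption taken from this post: Java 9+ caused Py4JJavaError here.
            print("Java %d found; this walkthrough worked with Java 8" % major)
        else:
            print("Java %d found" % major)
```

Running the script from the activated conda environment, in a terminal where `~/.bash_profile` has been sourced, prints one line per problem it finds. It only inspects the environment and does not start Spark.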
